TL;DR

- Start with outcomes, not tools. Define what deserves an alert before evaluating any platform.

- Monitoring, listening, and analytics are three different functions; conflating them creates alert fatigue and ignored dashboards.

- The signal vs. noise filter is the skill most teams skip, and the reason most monitoring setups fail quietly.

- Tool selection comes after defining your use case, not before.

- Later connects monitoring insights to planning and performance, so the signal actually leads somewhere.

Table of Contents

- TL;DR

- Why social media monitoring matters more than ever

- Social monitoring vs social listening vs analytics

- Start with outcomes, not tools

- What signals and noise look like in monitoring

- What to evaluate in social media monitoring tools

- What good social media monitoring looks like in practice

- How to run a smart pilot so you pick the right tool

- Build a repeatable monitoring cadence

- Turn monitoring insights into action with a simple loop

- Conclusion: build a system you'll actually use and turn signals into action

There's a specific kind of brand crisis that doesn't start with a press release or a major incident. It starts with a Reddit thread, or a TikTok comment section, or a cluster of tweets that nobody on the marketing team saw until a journalist quoted them.

By the time it surfaces in a Google Alert or a weekly report, the conversation has already developed a shape. People have formed opinions. The narrative has a direction. And the brand is playing catch-up instead of responding in real time.

Social media monitoring is the practice that closes that gap. Not perfectly, and not without effort, but consistently enough to move a team from reactive to aware. The difference between a brand that responds to a conversation and a brand that shapes it is often just a few hours of lead time and a monitoring setup that was actually built to catch something.

This guide covers how to choose the right tool, what to look for during evaluation, and how to build a system that turns signals into decisions, not just dashboards nobody opens.

Start with outcomes, not tools

The most common monitoring setup failure isn't a bad tool choice. It's buying a tool before defining what success looks like, then spending months staring at alerts that don't map to any decision.

Before evaluating any platform, write down the specific problems the team needs monitoring to solve. Then evaluate tools against those problems, not feature lists.

Copyable outcome statements worth defining upfront:

- Protect brand reputation by detecting risk early and responding within a defined time window

- Capture customer feedback themes to inform content, product, and CX decisions

- Spot trend opportunities so content planning moves faster than competitors

- Reduce response time for complaints and questions that have escalation potential

- Track competitor narrative shifts and product launches as they happen

The reason tool-first buying fails is that without outcome definition, every tool looks like it solves the problem, because every tool covers mentions, keywords, and sentiment at some level. The differentiation only becomes visible when testing against a real scenario with a specific outcome in mind.

Alert fatigue, the state where monitoring dashboards exist but nobody opens them, is almost always the result of buying a tool before defining what deserves an alert.

What signals and noise look like in monitoring

This is the skill most teams don't build explicitly, and the reason most monitoring setups slowly stop being used. When everything generates an alert, nothing gets acted on.

Noise looks like:

- One-off mention spikes with no ongoing conversation attached

- Irrelevant keyword matches from broad queries that weren't scoped tightly

- High volume from bot activity, giveaway participation, or spam that doesn't reflect real audience behavior

- Impressions without meaningful engagement or purchase intent

Signal is the opposite: contextual, repeatable, and action-worthy. A signal is a mention or conversation cluster that indicates something real: a shift in sentiment, an emerging complaint theme, a competitor move gaining traction, or a cultural moment the brand could participate in. Every signal has a "what next" attached to it.
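The noise patterns described above can be turned into a first-pass filter. The sketch below is a minimal illustration, not a feature of any particular tool: the `Mention` fields, the `EXCLUDED_TERMS` list, and the `min_cluster` threshold are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Mention:
    text: str
    author_is_bot: bool = False  # hypothetical flag; assumes the tool exposes one
    related_mentions: int = 0    # size of the conversation cluster around the mention


# Hypothetical exclusion terms; a real list grows out of weekly query cleanup.
EXCLUDED_TERMS = ("giveaway", "follow to win")


def is_noise(mention: Mention, min_cluster: int = 3) -> bool:
    """Return True when a mention matches one of the noise patterns."""
    text = mention.text.lower()
    if mention.author_is_bot:
        return True
    if any(term in text for term in EXCLUDED_TERMS):
        return True
    # One-off mentions with no ongoing conversation attached count as noise.
    return mention.related_mentions < min_cluster


# Placeholder mentions for illustration only.
mentions = [
    Mention("Checkout keeps failing on mobile", related_mentions=12),
    Mention("Giveaway! Tag a friend to enter", related_mentions=40),
    Mention("Love this brand", related_mentions=0),
]
candidates = [m for m in mentions if not is_noise(m)]
```

Only the checkout complaint survives this pass. Anything that clears the filter is still just a candidate; it becomes a signal once someone can attach a "what next" to it.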

The three-question filter for every alert worth escalating:

- What happened? Describe the spike or conversation without interpretation.

- Why does it matter? Explain the potential impact: brand risk, opportunity, or audience insight.

- What do we do next? Assign an action, even if that action is "monitor for 24 hours."

Teams that consistently document their decisions (what they responded to, what they ignored, and what happened as a result) build compounding institutional knowledge. The team six months in is significantly better at signal triage than the team on day one, but only if it kept records.
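One way to keep those records is to give every escalated alert the same three fields. The sketch below is an assumed structure, not a prescribed format; the `TriageRecord` name, its fields, and the example values are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class TriageRecord:
    what_happened: str   # the spike or conversation, without interpretation
    why_it_matters: str  # brand risk, opportunity, or audience insight
    next_action: str     # may be as small as "monitor for 24 hours"
    logged_on: date = field(default_factory=date.today)
    outcome: str = ""    # filled in later, once the result is known

    def is_complete(self) -> bool:
        """An alert is ready to escalate only when all three questions are answered."""
        answers = (self.what_happened, self.why_it_matters, self.next_action)
        return all(answer.strip() for answer in answers)


# Illustrative values only.
record = TriageRecord(
    what_happened="Mentions containing 'refund' rose sharply over six hours",
    why_it_matters="Possible billing issue; brand risk if left unanswered",
    next_action="Monitor for 24 hours",
)
```

A spreadsheet with the same columns works just as well. What matters is that the outcome field gets filled in afterwards, so the log records results and not only decisions.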

How to run a smart pilot so you pick the right tool

A two- to four-week pilot with real scenarios is the only reliable way to evaluate a monitoring tool. Feature demos show what the tool can do. A pilot shows whether it catches what your brand actually needs to catch.

Building the monitoring foundation for the pilot:

Start with brand and product keywords, including common misspellings and abbreviations. Add executive or spokesperson mentions if relevant. Layer in competitor tracking for launch announcements and narrative shifts. Include category keywords that indicate buying intent or dissatisfaction: the conversations that happen around the product category even when the brand name isn't mentioned.
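The keyword layers above can be kept as a small grouped query set, which makes it easy to see which layer a given mention matched. This is a rough sketch under stated assumptions: the group names and every term are placeholders, and real monitoring tools have their own query syntax.

```python
import re

# All terms are placeholders for illustration, not real brand data.
QUERY_GROUPS = {
    "brand": ["acme", "acmee", "acme co"],  # includes a common misspelling
    "spokesperson": ["jane doe"],
    "competitor": ["globex launch"],
    "category": ["switching project tools", "alternative to"],
}


def matched_groups(text: str) -> list[str]:
    """Return the keyword groups whose terms appear as whole words in the text."""
    lowered = text.lower()
    return [
        group
        for group, terms in QUERY_GROUPS.items()
        if any(re.search(rf"\b{re.escape(term)}\b", lowered) for term in terms)
    ]


category_hit = matched_groups("Looking for an alternative to my current scheduler")
brand_hit = matched_groups("ACMEE support is slow today")
```

The first example matches only the category group, which is the case the paragraph above describes: a relevant conversation that never names the brand.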

Test scenarios worth running:

- A negative spike and escalation simulation: create a test scenario and see how quickly the tool surfaces it and how cleanly the escalation path works.

- A competitor launch mention surge: does the tool catch competitor activity at useful velocity?

- A product issue theme detection test: can the tool cluster related complaints, or does it surface individual mentions without connecting them?

- A campaign-related conversation lift: does the tool accurately attribute mention increases to brand activity?

The pilot scorecard:

Evaluate across four dimensions: data quality (are the results accurate and relevant?), time-to-signal (how quickly does the tool surface something that matters?), usability (can the team operate it without significant training overhead?), and stakeholder trust (do the people who need to act on the alerts believe the data?). Stakeholder trust is underrated: a monitoring tool whose alerts people doubt doesn't get used.
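To compare tools after the pilot, the four dimensions can be rolled into a single weighted score. The sketch below assumes a 1-to-5 rating per dimension and weights chosen purely for illustration; the scorecard itself does not prescribe either, so adjust both to match the outcomes defined upfront.

```python
# Hypothetical weights; the four dimensions come from the scorecard, the weighting does not.
WEIGHTS = {
    "data_quality": 0.3,
    "time_to_signal": 0.3,
    "usability": 0.2,
    "stakeholder_trust": 0.2,
}


def pilot_score(ratings: dict[str, int]) -> float:
    """Weighted average of 1-5 ratings across the four scorecard dimensions."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


# Illustrative ratings for two unnamed tools.
tool_a = pilot_score(
    {"data_quality": 4, "time_to_signal": 5, "usability": 3, "stakeholder_trust": 2}
)
tool_b = pilot_score(
    {"data_quality": 4, "time_to_signal": 3, "usability": 4, "stakeholder_trust": 5}
)
```

In this made-up comparison the faster tool scores lower overall because stakeholders don't trust its output, which is the point of scoring trust as its own dimension.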

Build a repeatable monitoring cadence

A monitoring tool is only as valuable as the cadence it supports. Without a defined routine, alerts accumulate, get ignored, and the tool becomes a subscription nobody logs into.

Daily: Review alerts from the previous 24 hours. Triage by the three-question filter. Respond where required. Tag alert themes so patterns can be identified over time.

Weekly: Review the tagged themes from daily triage. Identify patterns that weren't visible day-to-day. Update keyword exclusions to reduce noise that keeps appearing. Create one action for the following week based on what monitoring surfaced.

Monthly: Validate that weekly patterns represent real trends. Report on decisions made based on monitoring signal and what the outcomes were. Adjust the strategy — update queries, add new tracking angles, and retire monitoring that's no longer relevant.

Ownership model: Define clearly who owns query management and keeps the setup current, who owns first response for escalations, and who owns reporting output for leadership and cross-functional teams. Ambiguous ownership is the second most common reason monitoring setups quietly stop being used.

Turn monitoring insights into action with a simple loop

Monitoring that doesn't lead to action is expensive noise management. The signal-to-action loop is what makes a setup actually valuable over time.

When a signal clears the three-question filter, convert it into a one-variable hypothesis before acting. The clearest categories:

- Response style: does this signal suggest the current community response approach isn't landing?

- Content format: is there a conversation theme the content calendar isn't addressing?

- Hook and messaging: is there language the audience is using that content isn't reflecting?

- Creator partnership angle: is there a category conversation where a creator relationship would add credibility?

Track the outcomes of every action taken from a monitoring signal: what triggered the action, what was changed, what moved and by how much, and what to repeat or stop doing. This compounding learning is what separates teams that get better at monitoring over time from teams that run the same setup indefinitely without improvement.

Monitoring insights connect most clearly to three downstream workflows: content planning (what the audience is talking about should inform what gets created), community management (escalation paths and response playbooks), and CX (complaint themes that surface in monitoring should reach product and service teams, not just social).

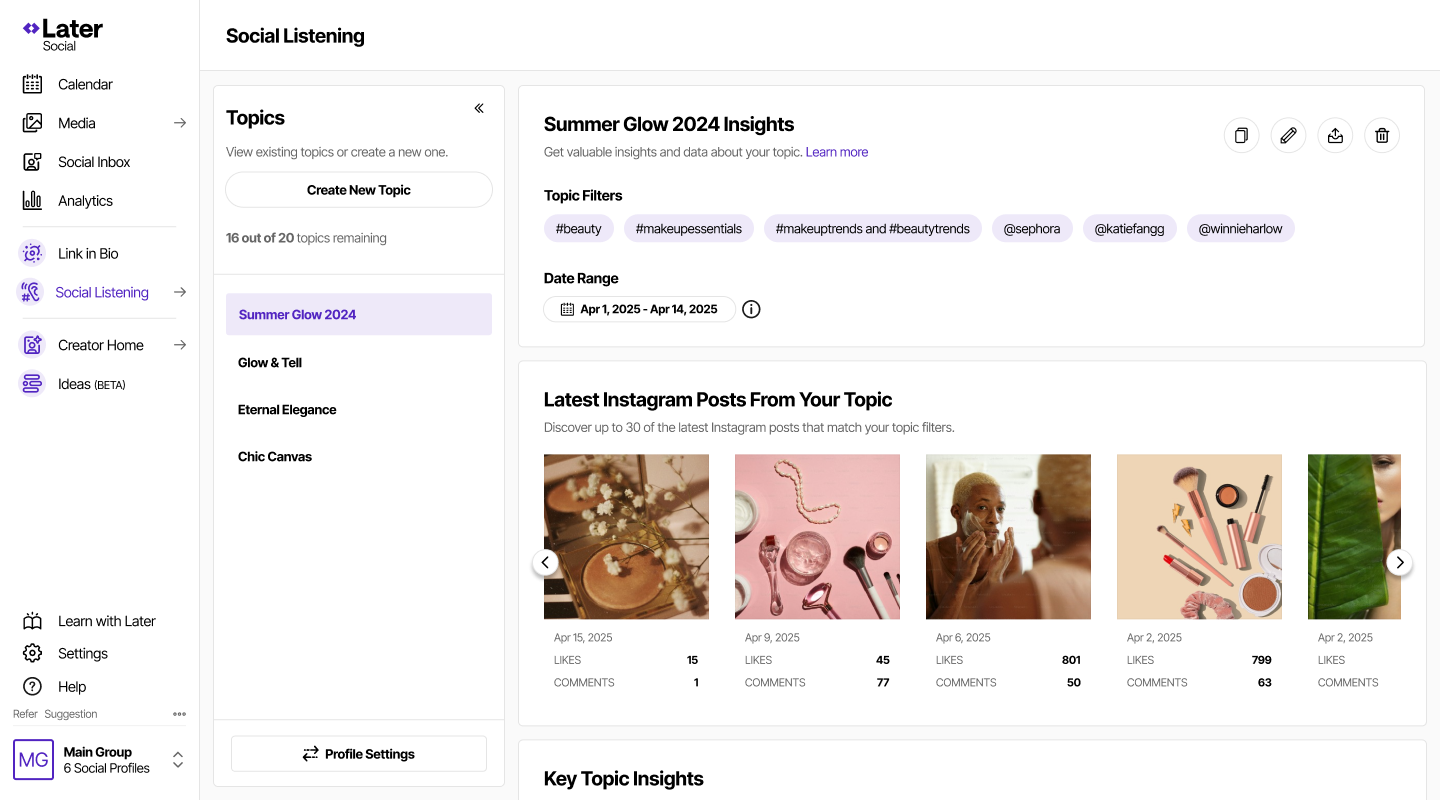

When monitoring insights connect directly to planning and performance reporting, the signal becomes significantly more valuable. Later's end-to-end social workflow is designed to make that connection, so what the monitoring surfaces becomes part of what gets scheduled, measured, and iterated on.

Conclusion: build a system you'll actually use and turn signals into action

The framework that works is consistent: start with outcomes, define what signal looks like before configuring anything, validate spikes with the three-question filter, build a cadence with clear ownership, and close the loop by tracking what monitoring-driven decisions actually produce.

The right monitoring setup is the one that catches the signals your team defined as important, integrates into the workflows your team already uses, and produces data people trust enough to act on. For social teams managing Instagram, Later's monitoring connects that signal directly to content planning, scheduling, and analytics, so what surfaces in monitoring becomes part of what gets created, published, and measured.

Ready to stop finding out about conversations after they've already shaped the narrative? Start with Later and build the monitoring cadence before the next conversation starts without you.

Social monitoring vs social listening vs analytics

These three functions get used interchangeably in tool marketing, which creates real confusion when teams are evaluating platforms. They're related but do different jobs, and building a stack that confuses them creates gaps and redundancy.

Monitoring is real-time and reactive. Alerts, mentions, spikes, and response workflows. The question it answers: what's being said right now, and does it require a response?

Social listening is longitudinal and analytical. Themes, sentiment direction, and the drivers of conversation over time. The question it answers: what does the pattern of conversation tell us about how people feel about this brand, category, or topic?

Analytics is performance measurement for owned content. The question it answers: how did our posts perform, what drove engagement, and what should we do differently?

The decision map: PR and comms teams need monitoring most because speed and escalation paths matter. Marketing teams need listening because they're making decisions about content strategy and positioning. Leadership and CX teams need analytics because they're measuring outcomes and informing product and service decisions.

A brand dealing with a reputation risk needs monitoring first — real-time alerts, escalation workflows, and fast response loops. A brand trying to understand why category sentiment shifted over the last quarter needs listening. A brand reporting on campaign performance needs analytics. Using a monitoring tool to answer listening questions produces noise. Using a listening tool to manage a real-time crisis produces lag.